Create Connection

Connect ekai to your data warehouse to begin the Schema Agents workflow. ekai establishes a secure, read-only connection to profile your data and generate ERDs.

Add a New Connection

- Navigate to Schema Agents in the sidebar

- Click the + Add Data Connection button

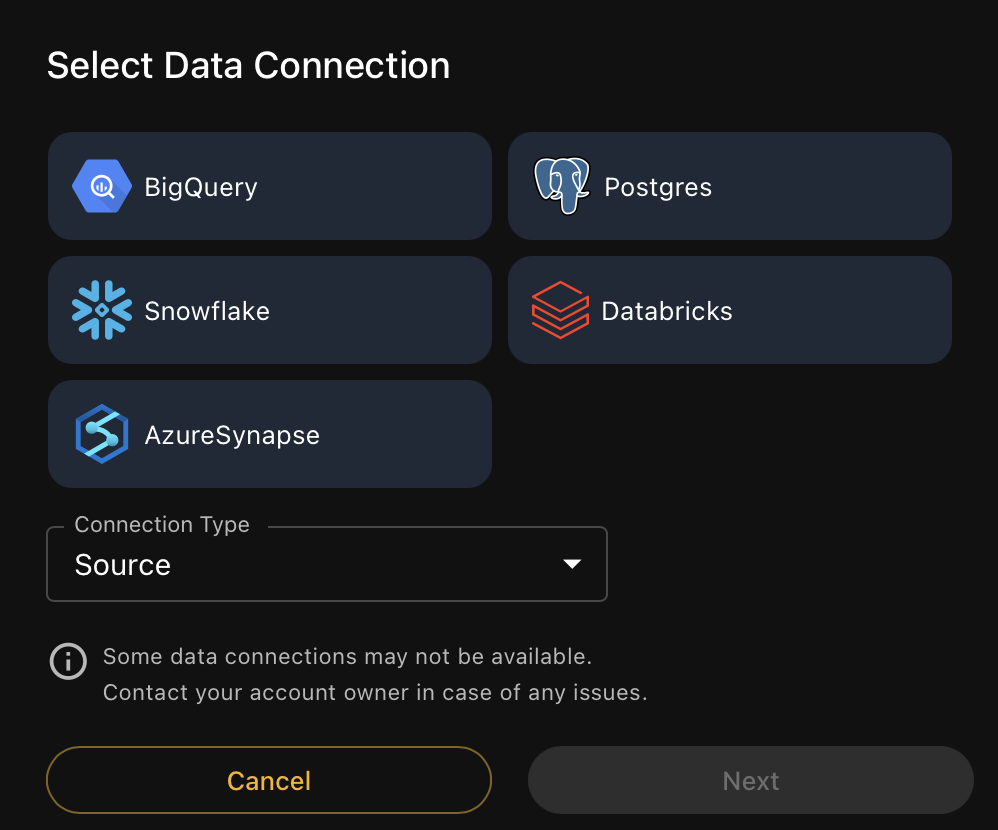

Select Your Platform

Choose your data warehouse platform:

| Platform | Status | Authentication |
|---|---|---|
| Snowflake | ✅ Available | User/Password, OAuth |
| Databricks | ✅ Available | Personal Access Token |
| Google BigQuery | ✅ Available | Service Account JSON |
| Azure Synapse | ✅ Available | SQL Auth, Azure AD |
| PostgreSQL | ✅ Available | User/Password, SSL |

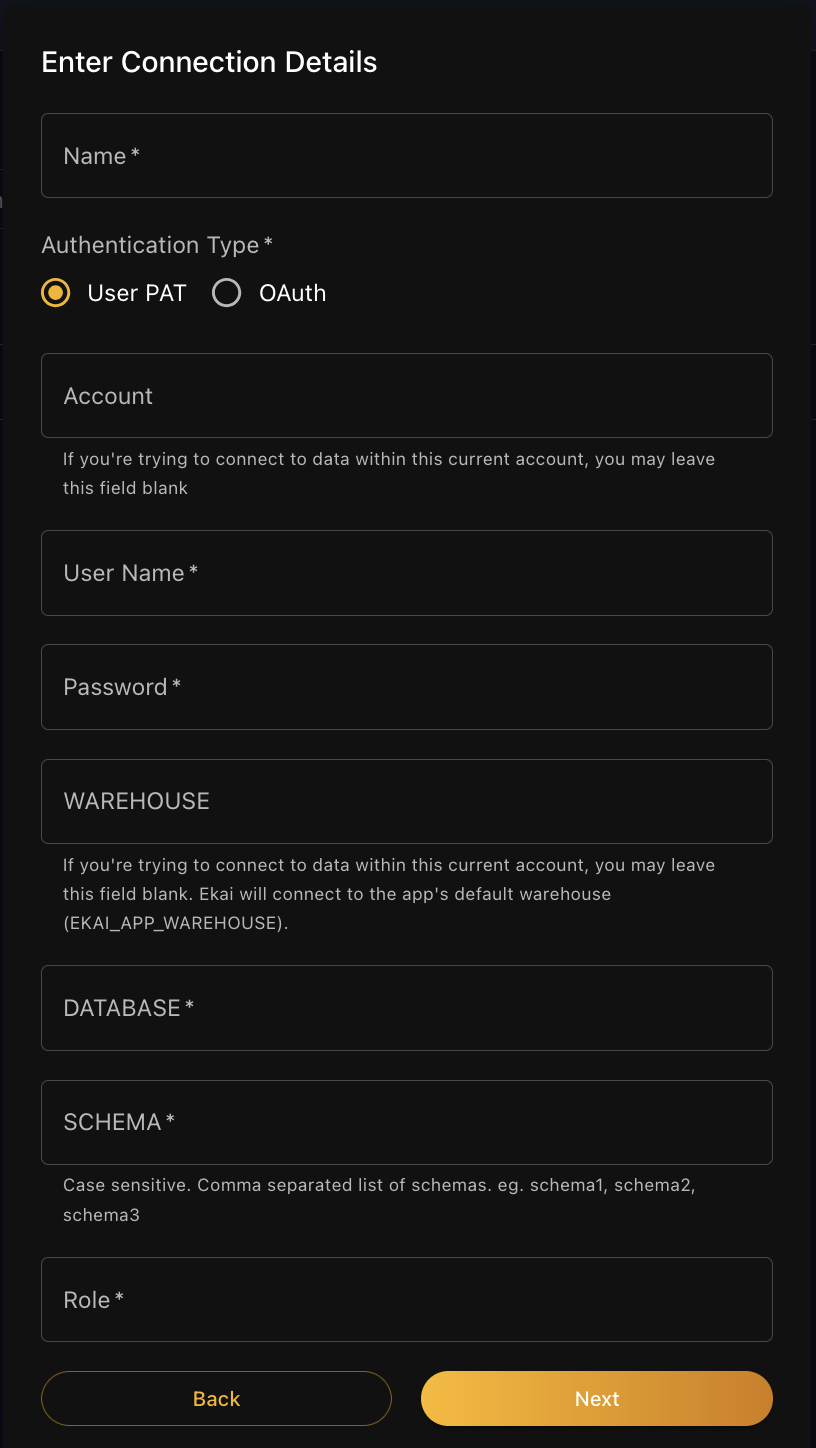

Connection Details

- Snowflake

- Databricks

- BigQuery

- Azure Synapse

- PostgreSQL

Snowflake

Required Fields:

| Field | Description |
|---|---|
| Name | Friendly name for this connection |
| Authentication Type | User PAT or OAuth |
| User Name | Snowflake username |
| Password | Snowflake password |
| DATABASE | Target database name |
| SCHEMA | Comma-separated list of schemas (case-sensitive) |
| Role | Snowflake role with read access |

Optional Fields:

- Account: Leave blank if connecting within the Snowflake Native App

- WAREHOUSE: Leave blank to use the default, EKAI_APP_WAREHOUSE

Enter schemas as a comma-separated list: schema1, schema2, schema3
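As an illustration of the comma-separated format, the sketch below splits such a string into individual schema names while preserving case, since schema names are case-sensitive. The function name `parse_schema_list` is hypothetical, and this is not ekai's actual parsing logic; it only shows how the input is expected to be read.

```python
def parse_schema_list(raw: str) -> list[str]:
    """Split a comma-separated schema string into individual schema names."""
    schemas: list[str] = []
    for part in raw.split(","):
        name = part.strip()  # drop spaces around the commas
        # Skip empty entries and exact duplicates. Case is preserved,
        # so "Sales" and "sales" are treated as two different schemas.
        if name and name not in schemas:
            schemas.append(name)
    return schemas


print(parse_schema_list("schema1, schema2, schema3"))
```

Checking the input this way before saving a connection can catch stray commas or accidental duplicates early.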

Databricks

Required Fields:

| Field | Description |
|---|---|
| Name | Friendly name for this connection |
| Workspace URL | Your Databricks workspace URL |
| SQL Warehouse/Cluster | Compute resource for queries |
| Catalog | Unity Catalog name |
| Schema | Target schema(s) |
| Personal Access Token | PAT for authentication |

BigQuery

Required Fields:

| Field | Description |
|---|---|
| Name | Friendly name for this connection |
| Project ID | GCP project ID |
| Dataset | Target dataset name |
| Service Account JSON | Upload or paste JSON key |

The service account needs BigQuery Data Viewer and BigQuery Job User roles.
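Before uploading or pasting the key, it can help to confirm that the file is a well-formed service account key. The sketch below is an assumption about what a useful pre-check looks like, not part of ekai: the helper name `check_service_account_key` is hypothetical, and it only inspects fields that appear in standard Google Cloud service account key files. It cannot verify that the IAM roles are granted.

```python
import json

# Fields present in a standard Google Cloud service account key file.
EXPECTED_KEYS = {"type", "project_id", "private_key", "client_email"}


def check_service_account_key(raw_json: str) -> list[str]:
    """Return a list of problems found in the key; an empty list means none were found."""
    try:
        key = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value must be an object"]
    # Report every expected field that is absent, in a stable order.
    problems = [f"missing field: {name}" for name in sorted(EXPECTED_KEYS - key.keys())]
    # Other credential types (for example user credentials) use a different "type".
    if key.get("type") not in (None, "service_account"):
        problems.append(f"unexpected key type: {key['type']}")
    return problems
```

The `project_id` inside the key is also a quick way to confirm that it matches the Project ID entered in the form.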

Azure Synapse

Required Fields:

| Field | Description |
|---|---|
| Name | Friendly name for this connection |
| Server | Synapse server name |
| Database | Target database |
| Authentication | SQL Auth or Azure AD |
| Username/Password | Credentials |

PostgreSQL

Required Fields:

| Field | Description |
|---|---|
| Name | Friendly name for this connection |
| Host | PostgreSQL server hostname |
| Port | Connection port (default: 5432) |
| Database | Target database name |
| Schema | Target schema(s) |
| Username | Database username |
| Password | Database password |

Optional Fields:

- SSL Mode: Enable SSL for encrypted connections
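The PostgreSQL fields above map naturally onto a standard libpq connection URI, which can be handy for testing the same credentials with another client before entering them. The sketch below assumes that mapping; the function name `build_postgres_uri` is hypothetical, and how ekai translates its SSL Mode option into a libpq `sslmode` value is not documented here.

```python
from urllib.parse import quote, urlencode


def build_postgres_uri(host, database, username, password, port=5432, sslmode=None):
    """Assemble a libpq-style connection URI from the form fields."""
    # Percent-encode credentials so characters such as "@" or "/" do not break the URI.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    uri = f"postgresql://{user}:{secret}@{host}:{port}/{quote(database, safe='')}"
    if sslmode:
        # libpq accepts values such as "require" or "verify-full" for sslmode.
        uri += "?" + urlencode({"sslmode": sslmode})
    return uri


print(build_postgres_uri("db.example.com", "analytics", "reader", "p@ss/word", sslmode="require"))
```

The host, database, and credentials in the usage line are placeholders.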

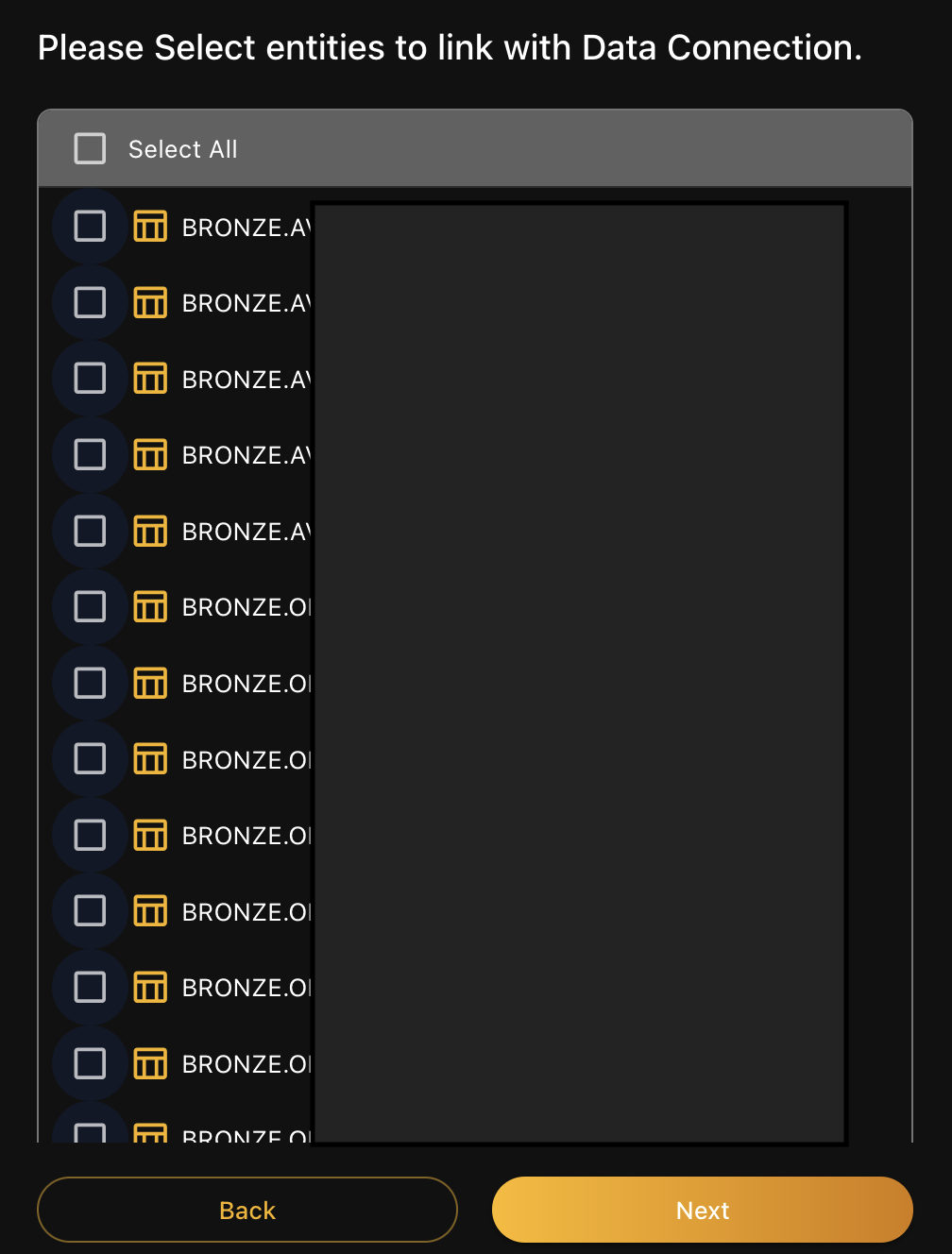

Select Tables

After entering connection details, ekai reads your schema and presents available tables:

- Select All — Include all tables in analysis

- Choose specific tables — Select individual tables

- Click Next to proceed to onboarding

LLM token consumption scales with the number of tables and columns in your connection. To reduce costs, limit your selection to the tables needed for your use case. For most workflows, keeping the selection under 100 tables gives the best cost efficiency.
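To make the guidance concrete, the sketch below counts the tables and columns in a planned selection and flags whether it stays under the 100-table guideline. It is a rough planning aid under stated assumptions: the function name `summarize_selection` and the input shape (table name mapped to a list of column names) are hypothetical, and it does not estimate actual token usage.

```python
TABLE_GUIDELINE = 100  # this page suggests staying under 100 tables for most workflows


def summarize_selection(selection: dict[str, list[str]]) -> dict[str, object]:
    """Count tables and columns in a selection and compare against the guideline."""
    table_count = len(selection)
    column_count = sum(len(columns) for columns in selection.values())
    return {
        "tables": table_count,
        "columns": column_count,
        "within_guideline": table_count < TABLE_GUIDELINE,
    }


print(summarize_selection({"orders": ["id", "total"], "customers": ["id"]}))
```

Because cost grows with columns as well as tables, a small number of very wide tables may still be worth trimming.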

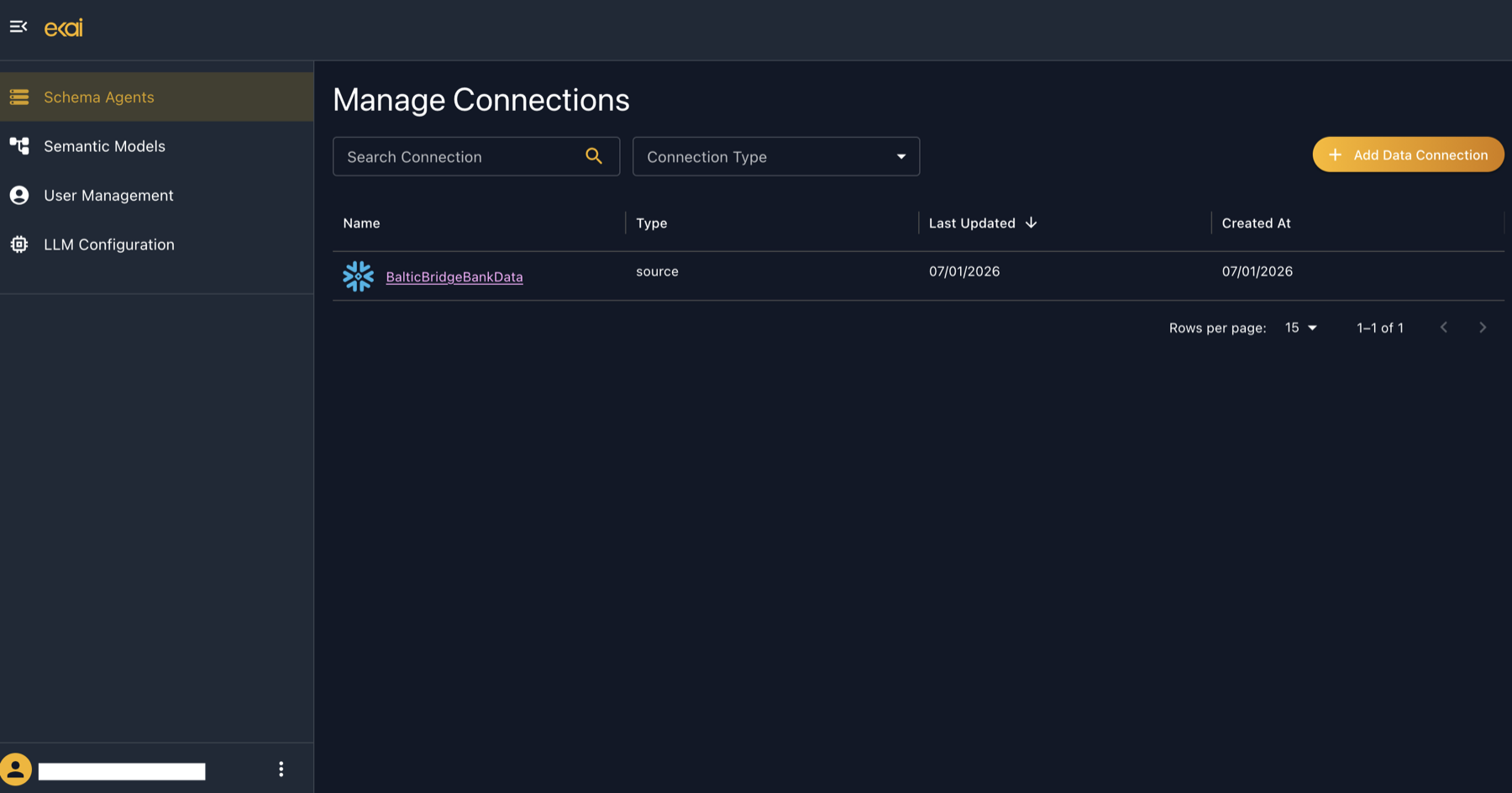

Connection Established

Your connection appears in the Schema Agents list. The list shows:

- Name — Connection identifier (click to open)

- Type — Source platform

- Last Updated — Most recent activity

- Created At — When connection was created

Connection Security

All connections are read-only with TLS 1.3 encryption. Raw data never leaves your warehouse—only statistical summaries are transferred.

See Security & Privacy for complete details.
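For readers who want to see what enforcing TLS 1.3 looks like on the client side, the sketch below builds a Python `ssl` context that refuses older protocol versions. It is a general illustration using the standard library, not ekai's implementation, and the function name `tls13_only_context` is hypothetical.

```python
import ssl


def tls13_only_context() -> ssl.SSLContext:
    """Create a client-side TLS context that only negotiates TLS 1.3."""
    # The default context verifies server certificates and hostnames.
    ctx = ssl.create_default_context()
    # Reject TLS 1.2 and earlier during the handshake.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


context = tls13_only_context()
print(context.minimum_version)
```

A context like this can be passed to standard-library clients that accept an `ssl.SSLContext`, and a handshake with a server that does not offer TLS 1.3 fails.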

Managing Connections

Edit Connection

Click on a connection name to view details. Update credentials or table selections as needed.

Delete Connection

From the connection list, select the delete action to remove a connection. This does not delete generated ERDs.

Share Connection

Use the Share button to give other users access to this connection.

Next Steps

- Onboarding Agent — AI analyzes your schema

- Statistical Profiling — Data pattern analysis