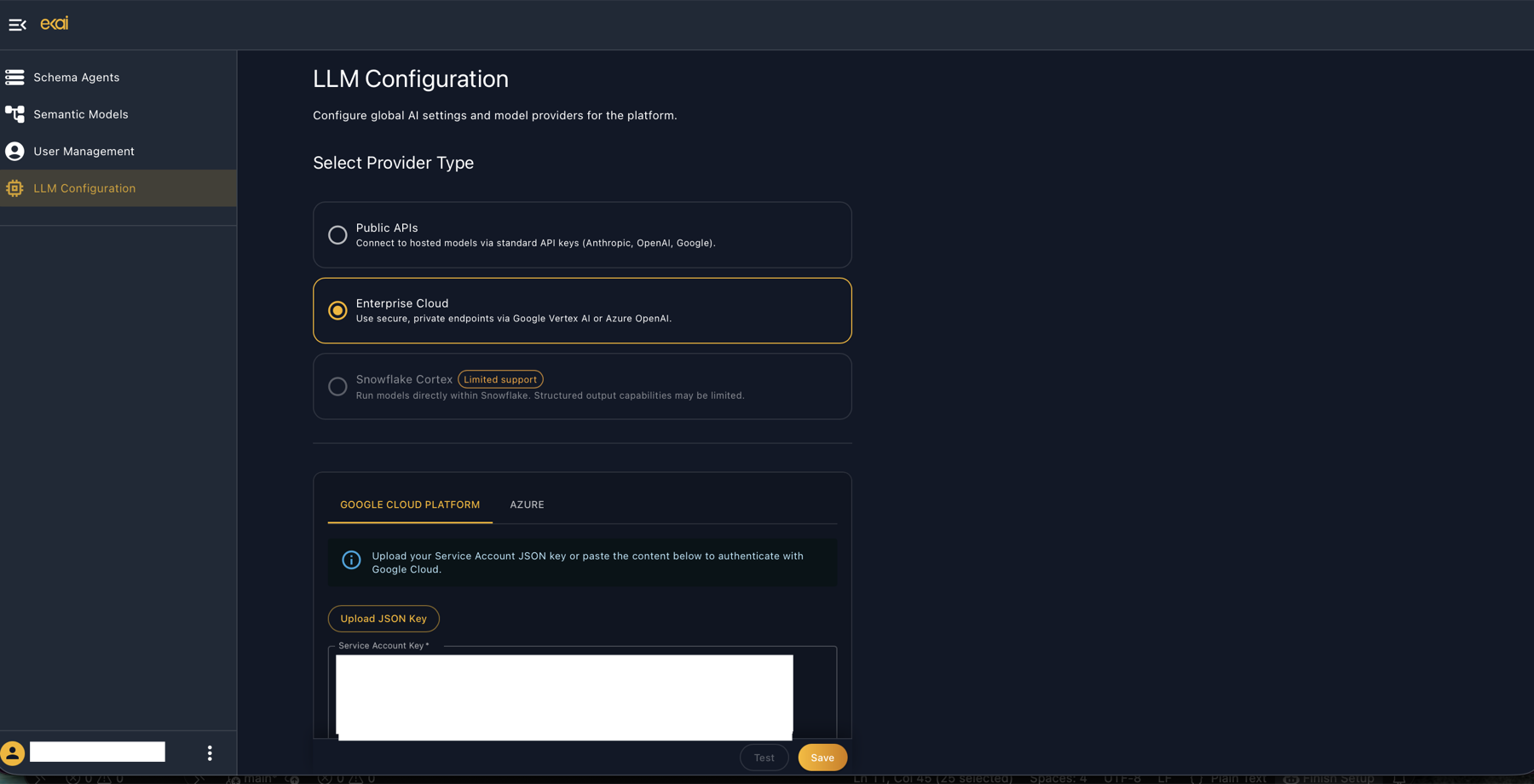

LLM Configuration

ekai uses Large Language Models (LLMs) for AI-powered features including schema onboarding, ERD generation, BRD interviews, and artifact generation. Configure your LLM provider based on your organization's security and compliance requirements.

LLM Configuration is only accessible to account Owners. Admins and Users do not see this menu item.

Configuration Screen

Navigate to LLM Configuration in the sidebar to configure your AI provider.

Provider Types

Public LLM APIs

Connect to hosted models via standard API keys.

| Provider | Models |
|---|---|
| Anthropic | Claude Sonnet 4.6 |
| OpenAI | GPT-5 mini |
| Google | Gemini 3.1 Pro |

Use case: Quick setup, standard security requirements

Enterprise Cloud APIs

Use secure, private endpoints via your enterprise cloud accounts.

| Provider | Description |
|---|---|
| Google Vertex AI | Claude & Gemini via Google Cloud |
| Azure OpenAI | GPT models via Azure |

Use case: Enterprise security, data residency, compliance

Snowflake Cortex LLMs

Coming Soon: Run models directly within Snowflake, with all processing kept in-platform.

Use case: Enhanced security, Snowflake Native App deployments

Configuration Steps

Public APIs

- Navigate to LLM Configuration in the sidebar

- Select Public APIs

- Enter API keys for your chosen providers:

  - Anthropic API key

  - OpenAI API key

  - Google AI API key

- Click Test to verify connectivity

- Click Save
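
Before filling in the form, it can help to confirm which provider keys you actually have on hand. The following is a minimal sketch of such a pre-check. The environment variable names (`ANTHROPIC_API_KEY`, `OPENAI_API_KEY`, `GOOGLE_API_KEY`) and the helper `missing_keys` are assumptions about where you might keep keys locally; ekai itself does not read them.

```python
import os
from typing import Mapping, Optional

# Hypothetical local variable names, one per key field on the Public APIs form.
PROVIDER_ENV_VARS = {
    "Anthropic": "ANTHROPIC_API_KEY",
    "OpenAI": "OPENAI_API_KEY",
    "Google AI": "GOOGLE_API_KEY",
}


def missing_keys(env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Return the providers whose key is unset or blank in the given environment."""
    env = os.environ if env is None else env
    return [
        provider
        for provider, var in PROVIDER_ENV_VARS.items()
        if not env.get(var, "").strip()
    ]
```

Calling `missing_keys()` with no arguments inspects the current process environment. You only need keys for the providers you intend to use, so a non-empty result is not necessarily a problem.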

Enterprise Cloud (Google Vertex AI)

- Select Enterprise Cloud

- Choose Google Cloud Platform tab

- Upload your Service Account JSON key or paste the content

- Click Test to verify connectivity

- Click Save

Service account requirements:

- Vertex AI User role

- Access to Claude and/or Gemini models

- Appropriate project permissions
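
A malformed key file is one possible cause of a failed test, so it may be worth sanity-checking the JSON before uploading it. The sketch below checks only the structure of a standard Google Cloud service account key file (`type`, `project_id`, `private_key`, `client_email`). It does not verify roles or model access, and the function name `check_service_account_key` is hypothetical.

```python
import json

# Fields found in a standard Google Cloud service account key file.
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_key(raw: str) -> list[str]:
    """Return a list of structural problems in the key JSON (empty if none found)."""
    try:
        key = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value is not an object"]

    # Report every required field that is absent or empty.
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if not key.get(name)]

    # A present but different type (e.g. a user credential file) is flagged separately.
    if key.get("type") not in (None, "", "service_account"):
        problems.append(f"unexpected type: {key['type']!r}")
    return problems
```

An empty list means the file looks like a service account key; whether the account has the Vertex AI User role can only be confirmed in Google Cloud or with the Test button.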

Enterprise Cloud (Azure OpenAI)

- Select Enterprise Cloud

- Choose Azure tab

- Enter Azure OpenAI credentials:

  - Endpoint URL

  - API Key

  - Deployment names

- Click Test to verify connectivity, then click Save
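
The three Azure values are easy to mistype, so a quick completeness check before entering them can save a failed test. This is a minimal sketch: the `AzureOpenAISettings` class and `validate` function are hypothetical and not part of ekai or any Azure SDK, and the check covers only the shape of the values, not whether Azure accepts them.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AzureOpenAISettings:
    """The three values requested on the Azure tab (hypothetical container)."""
    endpoint_url: str
    api_key: str
    deployment_names: list[str] = field(default_factory=list)


def validate(settings: AzureOpenAISettings) -> list[str]:
    """Return a list of obvious problems with the settings (empty if none found)."""
    problems = []
    parsed = urlparse(settings.endpoint_url.strip())
    if parsed.scheme != "https" or not parsed.netloc:
        problems.append("endpoint URL should be a full https:// URL")
    if not settings.api_key.strip():
        problems.append("API key is empty")
    if not any(name.strip() for name in settings.deployment_names):
        problems.append("at least one deployment name is required")
    return problems
```

For example, `validate(AzureOpenAISettings("https://example-resource.openai.azure.com/", "key", ["my-deployment"]))` returns an empty list; the resource and deployment names here are placeholders.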

Model Usage by Task

ekai automatically selects the optimal model for each task:

| Task | Primary Model | Fallback |
|---|---|---|
| Schema Onboarding | Claude Sonnet 4.6 | Gemini 3.1 Pro |
| ERD Generation | Claude Sonnet 4.6 | GPT-5 mini |
| BRD Interview | Claude Sonnet 4.6 | GPT-5 mini |
| Code Generation | Claude Sonnet 4.6 | GPT-5 mini |
| Validation | Gemini 3.1 Pro | GPT-5 mini |
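
One way to read this table is as a preference lookup: try the primary model, then the fallback. How ekai selects models internally is not described here, so the sketch below is only an illustration of that reading. `MODEL_PREFERENCES` and `pick_model` are hypothetical names, and the "available" set stands in for whichever models your configured providers expose.

```python
from typing import Optional

# (primary, fallback) per task, taken from the Model Usage by Task table.
MODEL_PREFERENCES = {
    "Schema Onboarding": ("Claude Sonnet 4.6", "Gemini 3.1 Pro"),
    "ERD Generation": ("Claude Sonnet 4.6", "GPT-5 mini"),
    "BRD Interview": ("Claude Sonnet 4.6", "GPT-5 mini"),
    "Code Generation": ("Claude Sonnet 4.6", "GPT-5 mini"),
    "Validation": ("Gemini 3.1 Pro", "GPT-5 mini"),
}


def pick_model(task: str, available: set[str]) -> Optional[str]:
    """Return the primary model if available, else the fallback, else None."""
    primary, fallback = MODEL_PREFERENCES[task]
    if primary in available:
        return primary
    if fallback in available:
        return fallback
    return None
```

Under this reading, an account with only a Google key configured would have a model for Schema Onboarding and Validation but none for the other three tasks, which is one reason to configure more than one provider.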

Security Considerations

What Goes to LLM Providers

| Sent | Not Sent |
|---|---|
| Schema metadata (table/column names) | Raw data values |
| Statistical summaries | Individual records |
| Business requirement text | Sensitive business data |
| Generated code for review | Credentials or secrets |
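
To make the distinction between metadata and raw data concrete, the sketch below reduces a table to names and a row count while dropping the row values. It is an illustration only: the `Table` class and `to_metadata` function are hypothetical and do not represent ekai's actual payload format.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Table:
    """A toy in-memory table: schema plus row values (hypothetical structure)."""
    name: str
    columns: list[str]
    rows: list[tuple[Any, ...]] = field(default_factory=list)


def to_metadata(table: Table) -> dict[str, Any]:
    """Keep table and column names plus a row count; drop the row values."""
    return {
        "table": table.name,
        "columns": list(table.columns),
        "row_count": len(table.rows),
    }
```

The resulting dictionary carries table and column names and one summary statistic, but none of the individual records.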

Enterprise Cloud Benefits

- Private cloud endpoints, with no traffic over the public internet

- Enterprise security controls and audit logging

- Data residency compliance (regional endpoints)

- Your cloud provider's compliance certifications apply

Troubleshooting

| Issue | Solution |
|---|---|
| Test fails | Verify API key/credentials are correct and have required permissions |
| Model unavailable | Check your provider subscription includes the required models |
| Rate limiting | Enterprise Cloud APIs have higher limits; consider upgrading |
| Timeout errors | Check network connectivity to API endpoints |
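
For timeout errors, a plain TCP check can help separate a network problem from a credential problem. The sketch below uses only the Python standard library. The hosts listed are the public API hosts of the three providers; Enterprise Cloud endpoints differ per account and would need to be substituted. `can_connect` and `PUBLIC_API_HOSTS` are hypothetical names.

```python
import socket

# Public API hosts; replace with your own endpoint host for Enterprise Cloud.
PUBLIC_API_HOSTS = (
    "api.anthropic.com",
    "api.openai.com",
    "generativelanguage.googleapis.com",
)


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

Running `can_connect(host)` for each entry in `PUBLIC_API_HOSTS` from the affected network shows whether port 443 is reachable. A `True` result confirms only that the connection opens, not that the API key is valid.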

Next Steps

- User Management - Configure team access

- Create Connection - Connect your warehouse

- Schema Agents Overview - Start using ekai